The recent confirmation by Corning CEO Wendell Weeks that two unnamed hyperscale cloud providers have entered agreements exceeding the scale of the $6 billion Meta partnership signals a structural shift in how data centers are physically built. While market observers focus on the headline dollar figures, the actual driver is a fundamental mismatch between GPU processing power and the physical transport layer. Traditional networking architecture has reached a point where moving data electrically over copper consumes a disproportionate share of the power budget, forcing a transition to high-density optical connectivity at the rack level.

The Scaling Laws of AI Infrastructure

The move toward these massive "Lumen" platform deals is dictated by three technical constraints that render previous networking standards obsolete.

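- The Radix Limitation: A switch's radix is its port count, and it caps how many devices a single switching tier can reach. As AI clusters scale to hundreds of thousands of GPUs, the number of switch ports and links required to interconnect them grows faster than the GPU count, because every additional tier brings its own ports, optics, and cables. To maintain low latency, the network must minimize "hops." High-fiber-count cables make a flatter network topology practical, reducing the need for intermediate switching layers that add cost and power draw.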

- Thermal Scarcity: In a modern AI data center, the power density per rack has jumped from roughly 10 kW to over 100 kW. Traditional copper cabling restricts airflow and dissipates heat through resistance. Optical fiber itself is a passive, cool transport medium, so the thermal burden moves off the cable and onto the transceivers at each end.

- The Bandwidth-Distance Product: Copper's passive reach at 800G and 1.6T speeds shrinks to roughly a meter or two, and it keeps shrinking as per-lane rates climb. This physical reality forces hyperscalers to use optical fiber for almost every connection that leaves the rack, making the fiber provider a "toll collector" for the AI era.
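
The radix constraint in the first bullet can be made concrete with a small sketch. It assumes an idealized non-blocking folded-Clos (fat-tree) fabric built from identical switches, with one network port per GPU; under those assumptions a fabric with `tiers` switching layers reaches at most 2 × (radix / 2)^tiers end-points. The function names and the 100,000-GPU target are illustrative, not figures from any specific deployment.

```python
def max_hosts(radix: int, tiers: int) -> int:
    """Upper bound on end-points in a non-blocking fat-tree.

    Assumes identical switches with `radix` ports, half of them facing
    down and half facing up at every tier except the top one.
    """
    return 2 * (radix // 2) ** tiers


def tiers_needed(hosts: int, radix: int) -> int:
    """Smallest number of switching tiers that can reach `hosts` end-points."""
    tiers = 1
    while max_hosts(radix, tiers) < hosts:
        tiers += 1
    return tiers


# Illustrative target: a 100,000-GPU cluster, one port per GPU (assumption).
for radix in (32, 64, 128):
    print(f"radix {radix}: {tiers_needed(100_000, radix)} tiers")
```

Under these assumptions, radix-32 and radix-64 switches both need four tiers to reach 100,000 end-points, while radix-128 switches need three. Each tier removed takes out a layer of switches along with the optics and cabling between layers, which is the flattening effect described above.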

Quantifying the Hyperscale Pivot

The Meta agreement, valued at approximately $6 billion, established a baseline for what a Tier-1 AI build-out requires in terms of specialized fiber and connectivity hardware. The existence of two larger deals implies a total addressable market (TAM) expansion driven by the race for Artificial General Intelligence (AGI). These agreements are not merely purchase orders; they are capacity reservations for specialized glass manufacturing that few other firms can replicate.

The competitive advantage here is rooted in "bend-insensitive" fiber technology. In the cramped environments of a generative AI cluster, fiber must be coiled and routed through tight spaces. Standard fiber leaks light, and with it signal power, when bent tightly. Corning's proprietary bend-insensitive fiber tolerates tighter bend radii, allowing higher packing densities without signal degradation. For a hyperscaler, this translates directly to more GPUs per square foot of real estate.

The Margin Mechanics of Connectivity

The financial structure of these deals reveals a transition from a commodity-component model to a strategic-system model. Corning is no longer just selling bulk fiber by the kilometer; it is selling pre-terminated, high-density plug-and-play solutions. This shift alters the margin profile of the business in several ways.

- Engineering Integration: These $6B+ contracts involve co-designing the physical layer with the hyperscaler’s specific hardware stack. This creates a high switching cost, as the physical infrastructure of the data center becomes optimized for a specific connectivity architecture.

- Operating Leverage: Because the fixed costs of glass furnaces and chemical vapor deposition (CVD) processes are immense, these massive, multi-year commitments allow for maximum utilization of manufacturing assets. This drives down the unit cost while maintaining high ASPs (Average Selling Prices) for specialized products.

- Inventory De-risking: In the previous telecom cycle, Corning suffered from "lumpy" demand. The AI-driven hyperscale deals provide a multi-year visibility window that allows for disciplined capital expenditure.
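
The operating-leverage point in the list above comes down to simple arithmetic: when fixed costs dominate, unit cost falls as committed volume rises, and margin widens if selling prices hold. The sketch below illustrates that relationship only. Every number in it is hypothetical and chosen for round results; none is drawn from Corning's financials.

```python
def unit_cost(fixed_cost: float, variable_cost_per_unit: float, units: float) -> float:
    """Fully loaded cost per unit: fixed cost spread over volume, plus variable cost."""
    if units <= 0:
        raise ValueError("units must be positive")
    return fixed_cost / units + variable_cost_per_unit


def gross_margin(asp: float, cost: float) -> float:
    """Gross margin as a fraction of the average selling price (ASP)."""
    return (asp - cost) / asp


# Hypothetical figures, in arbitrary currency units (assumptions, not real data).
FIXED_COST = 100.0      # furnaces, deposition lines, etc.
VARIABLE_COST = 2.0     # per unit of fiber shipped
ASP = 10.0              # held constant across both scenarios

for units in (20, 40):
    cost = unit_cost(FIXED_COST, VARIABLE_COST, units)
    print(f"{units} units: unit cost {cost:.2f}, margin {gross_margin(ASP, cost):.0%}")
```

In this toy case, doubling volume from 20 to 40 units cuts unit cost from 7.00 to 4.50 and lifts gross margin from 30% to 55%, which is the mechanism a multi-year capacity reservation is meant to lock in.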

The Three Pillars of Optical Dominance

To understand why hyperscalers are committing such vast sums, one must look at the specific components being deployed. The value is concentrated in three distinct areas.

High-Count Ribbon Fiber

Modern AI clusters require cables containing up to 6,912 fibers in a single sheath. Manufacturing this requires precise ribbonization techniques to ensure that all fibers can be spliced simultaneously, reducing installation time from weeks to days.
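
A rough count shows why ribbonization matters for installation time. The sketch below assumes 12-fiber ribbons joined by mass fusion splicing, one ribbon per splice operation; the ribbon width is an assumption for illustration, and real cables and splicers may use other ribbon sizes.

```python
def splice_operations(fiber_count: int, fibers_per_splice: int) -> int:
    """Number of splice operations needed to join every fiber in a cable.

    Uses integer ceiling division so a partially filled final group
    still counts as one operation.
    """
    return (fiber_count + fibers_per_splice - 1) // fibers_per_splice


FIBERS = 6912          # fiber count cited in the text
RIBBON_WIDTH = 12      # assumed fibers per ribbon

single = splice_operations(FIBERS, 1)
ribbon = splice_operations(FIBERS, RIBBON_WIDTH)
print(f"single-fiber splices: {single}")
print(f"ribbon splices:       {ribbon}")
print(f"reduction factor:     {single // ribbon}x")
```

Under that assumption, one cable joint drops from 6,912 individual splices to 576 ribbon splices, a twelvefold reduction in operations before any difference in per-splice handling time is considered.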

Small-Form-Factor Connectors

The physical space on the back of a switch or a server is finite. As bandwidth demands quadruple, the connectors must shrink. The development of MMC, a very-small-form-factor multi-fiber connector, and other high-density interfaces allows for roughly 3x the density of the MPO-style multi-fiber connectors they succeed, and a far larger gain over traditional duplex LC connectors.

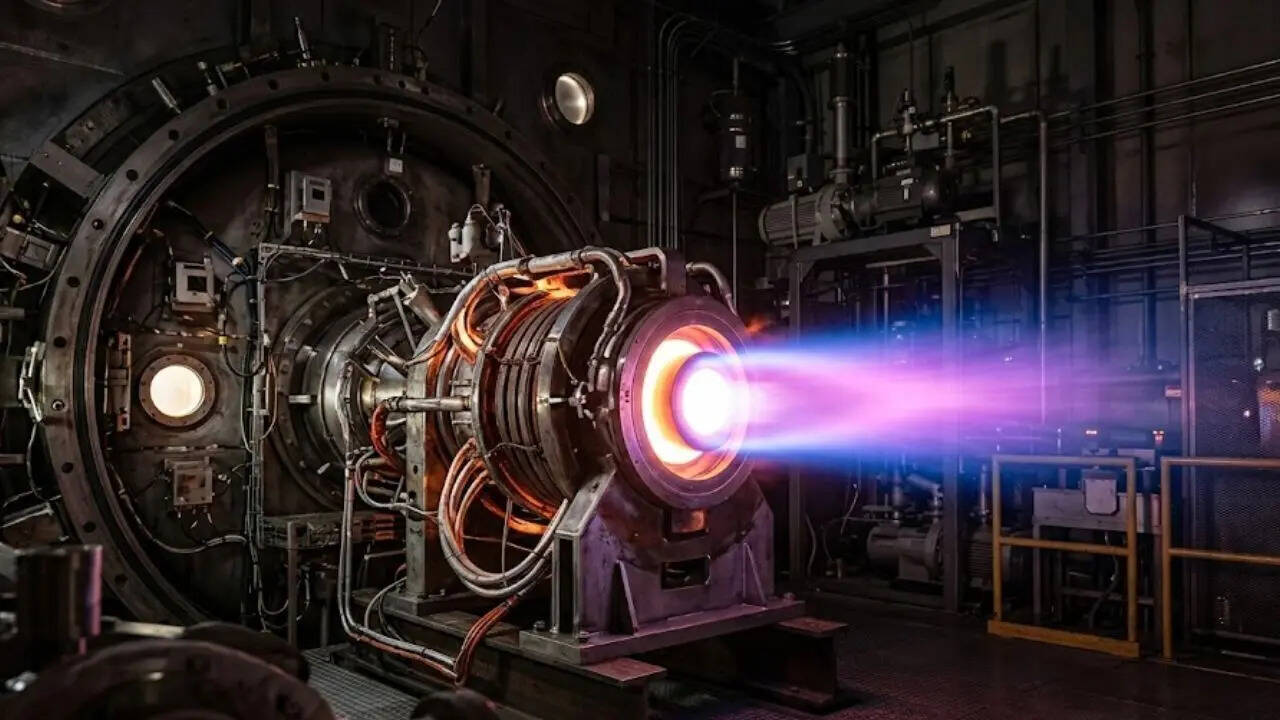

Optical Backplanes

Inside the server rack, the "midplane" or "backplane" is transitioning from copper traces on a circuit board to embedded optical waveguides. This is the final frontier of the optical transition. Combined with co-packaged optics (CPO), which places the optical interface on the chip package itself, it carries the light signal all the way to the silicon.

Structural Risks and Bottlenecks

Despite the bullish projections, several constraints could throttle the realization of these multi-billion dollar deals.

- Power Grid Interconnects: A hyperscaler may have the fiber and the GPUs, but if the local utility cannot provide 500MW of power to a single site, the deployment stalls. The bottleneck has shifted from data transport to energy procurement.

- Splicing Labor Shortages: Installing cables with thousands of fibers, up to the 6,912-fiber designs described above, requires a highly skilled workforce. There is a global shortage of technicians capable of performing high-density fusion splicing at scale.

- The Silicon Photonics Wildcard: If competitors make a breakthrough in low-cost silicon photonics that allows for high-performance data transfer using cheaper, standard-grade glass, the premium commanded by specialized fiber could erode.
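
The power constraint in the first bullet can be sized with back-of-the-envelope arithmetic. The sketch below takes the 500 MW site figure and the 100 kW rack density mentioned in this article, and adds an assumed power usage effectiveness (PUE) of 1.2 to account for cooling and other facility overhead. The PUE value and the function name are illustrative assumptions.

```python
def supportable_racks(site_power_mw: float, rack_power_kw: float, pue: float) -> int:
    """Whole racks a site can power once facility overhead (PUE) is included."""
    site_power_kw = site_power_mw * 1000
    facility_kw_per_rack = rack_power_kw * pue  # IT load plus cooling/overhead
    return int(site_power_kw // facility_kw_per_rack)


# 500 MW and 100 kW come from the text; PUE of 1.2 is an assumption.
racks = supportable_racks(site_power_mw=500, rack_power_kw=100, pue=1.2)
print(f"racks supportable: {racks}")
```

With these inputs the site supports a little over 4,000 racks. If the utility can deliver only part of the requested power, the rack count falls in proportion, no matter how much fiber has been reserved.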

The Logic of the Unnamed Hyperscalers

While the identities of the "two unnamed hyperscalers" remain officially undisclosed, the capital expenditure profiles of Microsoft, Google (Alphabet), and Amazon (AWS) make them the most logical candidates.

Microsoft's "Stargate" project, reported in 2024 as a roughly $100 billion AI supercomputer planned with OpenAI, would require a connectivity budget that dwarfs the Meta pact. Google's vertical integration, including its custom TPU (Tensor Processing Unit) chips, relies heavily on proprietary optical switching (TPU v4/v5 use optical circuit switches), making it a prime candidate for a massive glass supply agreement. Amazon's recent pivot toward custom AI silicon (Trainium/Inferentia) similarly necessitates a massive overhaul of its physical networking layer to keep pace with NVIDIA-based clusters.

Tactical Implications for Infrastructure Strategy

The primary takeaway for the broader industry is that the "AI trade" is moving from the silicon layer (NVIDIA) to the physical transport layer. The "Lumen" deals indicate that the biggest players in the world are de-risking their supply chains by locking up glass capacity years in advance.

The strategic play is to identify the secondary beneficiaries of this optical densification. As fiber counts explode, the demand for automated fiber management, specialized cooling systems for optical transceivers, and modular data center enclosures should scale alongside Corning's glass shipments. The networking stack is being rebuilt from the ground up, and the foundation is increasingly made of silica.

The immediate priority for infrastructure investors and operators is to validate the power-to-fiber ratio in their upcoming builds, that is, whether the planned fiber plant matches the compute density the site's power budget can actually support. Any facility designed with traditional copper-to-top-of-rack architecture is already a legacy asset. Future-proofing requires an "all-optical" mindset where the physical fiber plant is treated as a 20-year strategic asset, not a 5-year consumable.